Behavior-Driven Product Validation Framework for Early-Stage SaaS and FinTech Startups

Ivan Denysyuk

Abstract

Early-stage product decisions are frequently made before reliable market signals emerge. These decisions are often influenced by intuition, stakeholder pressure, selective feedback, and urgency rather than observable behavior.

This article presents a structured product validation framework designed to reduce uncertainty before committing development resources.

The framework prioritizes behavioral evidence over stated preference and leads to one of three outcomes: proceed, pivot, or stop.

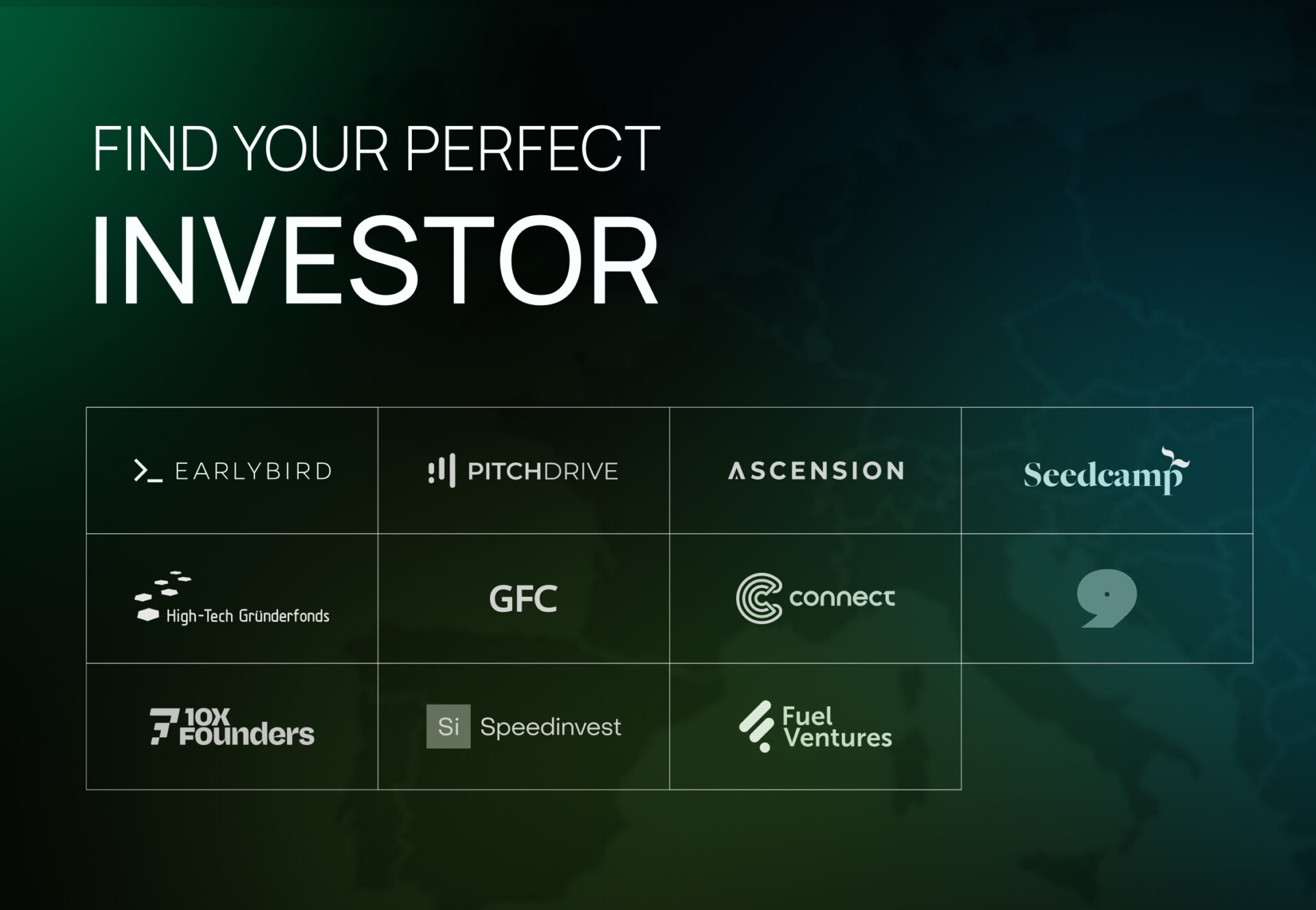

The methodology is particularly relevant for SaaS, FinTech, and B2B platforms operating under capital constraints and pre-product-market-fit conditions.

Why startup validation fails before MVP

Most early-stage startups don’t fail because the idea is bad. They fail because they run out of money while trying to align the product with what users actually want.

Common failure patterns include:

- MVP scope inflation driven by internal stakeholders

- Feature development without measurable adoption signals

- Interviews run without defined success criteria

- Feedback interpreted in ways that confirm existing beliefs

- Product expansion before users actually trust it

The underlying issue is a lack of explicit decision boundaries.

Without predefined failure criteria, almost any feedback can be interpreted as validation.

Core validation question

Before investing in full MVP development, the relevant strategic question is:

What is the smallest viable product interaction that users will understand, trust, and meaningfully engage with — given current capital and time constraints?

This reframes validation from assessing an idea to testing behavioral viability.

It shifts the focus from “Is this idea appealing?” to “Does this interaction produce measurable commitment?”

Framework Overview

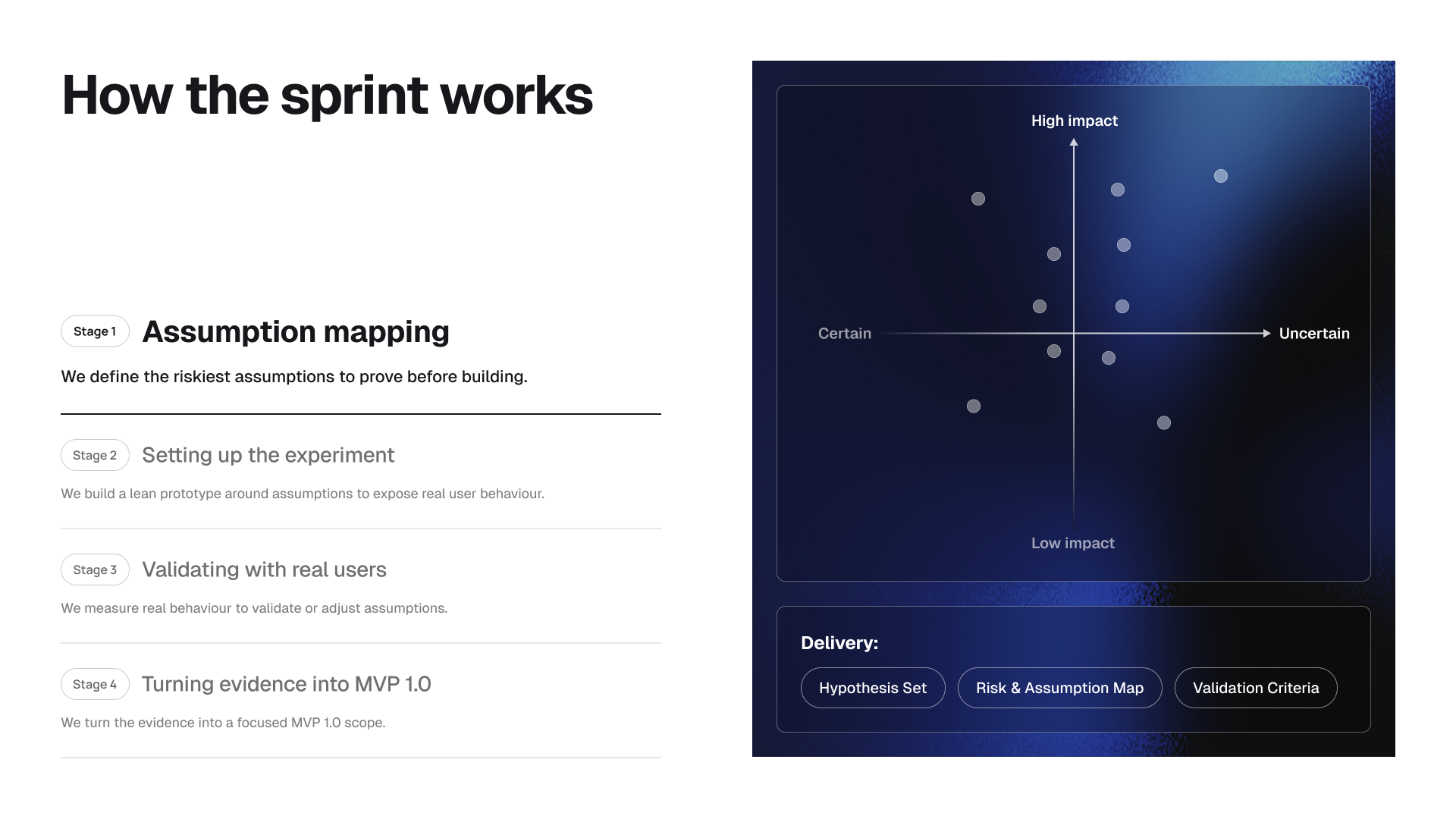

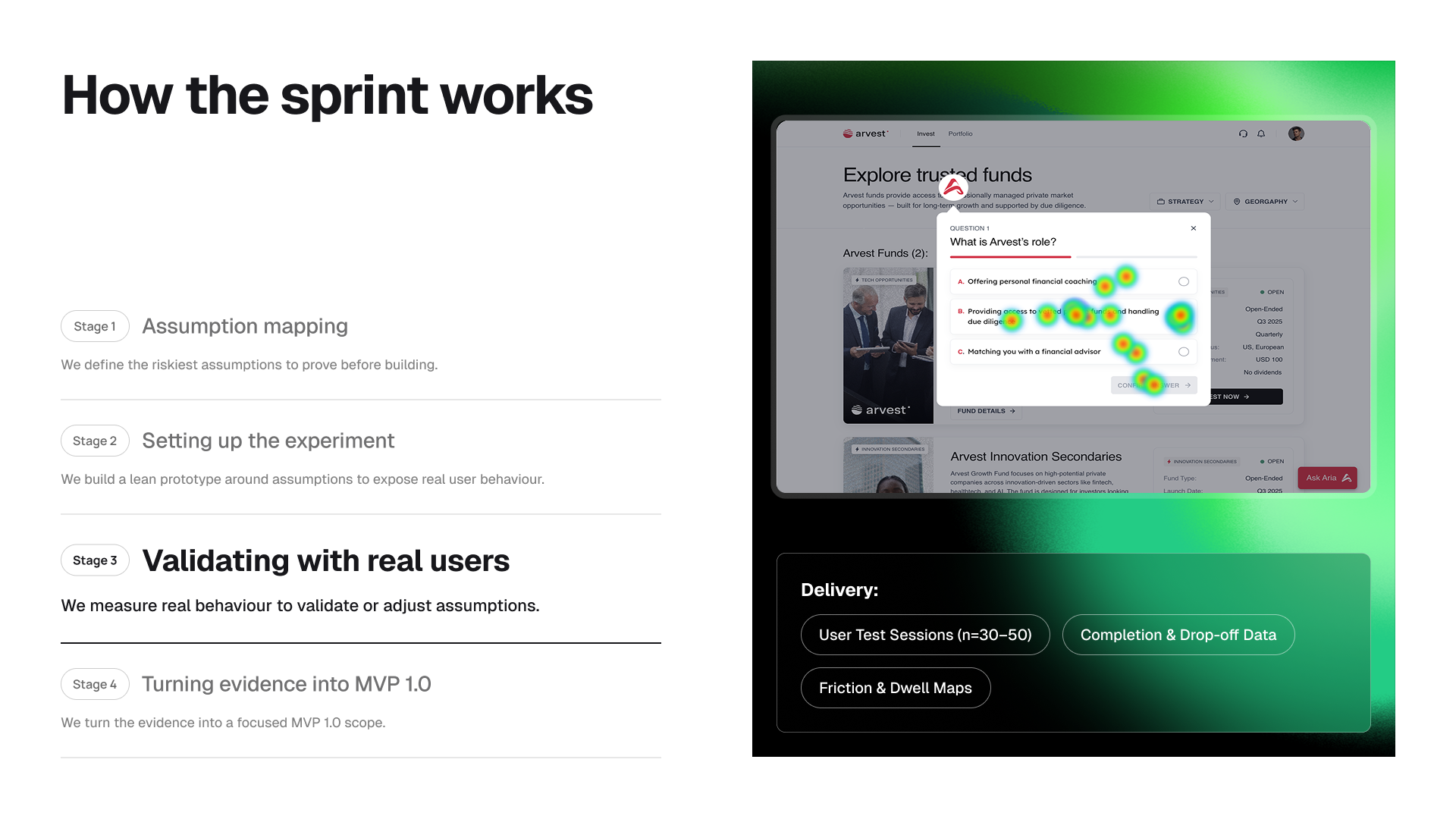

The framework runs 8–10 weeks and consists of four stages:

- Stage 1: Hypothesis & Assumption Mapping

- Stage 2: Minimum Viable Experiment Design

- Stage 3: Behavioral Testing

- Stage 4: Evidence-based MVP Definition & Roadmap

Each stage produces artifacts tied to explicit behavioral metrics.

Hypothesis & Assumption Mapping

All early-stage products rely on untested assumptions.

Examples:

- The target user recognizes the problem.

- The current workaround is insufficient.

- The value proposition is understandable.

- The flow generates sufficient trust to continue.

- Users will commit time or capital.

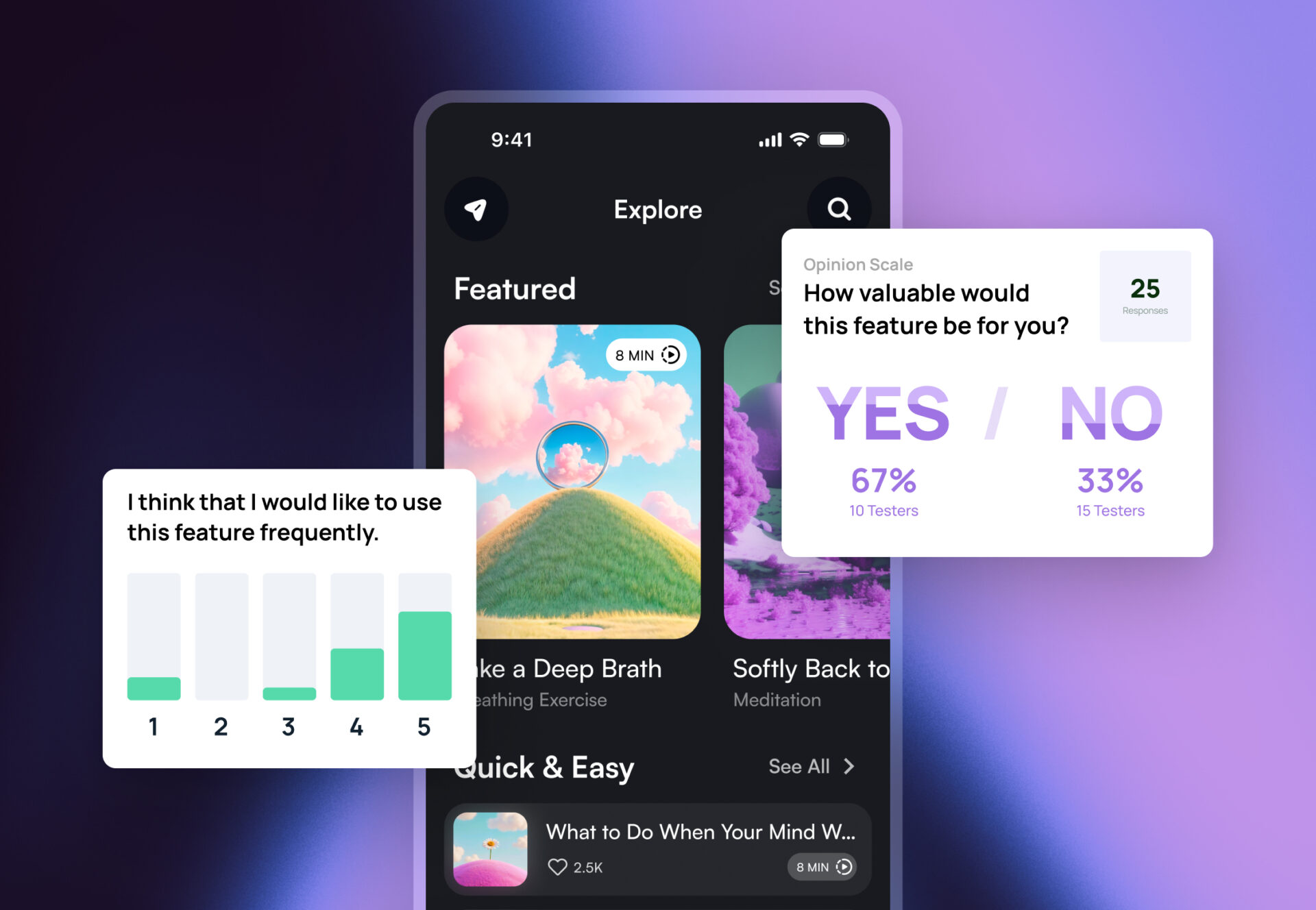

These assumptions are made explicit. For each assumption, we define:

- Observable behavioral indicators

- Success thresholds

- Pivot or stop boundaries

Example decision boundary:

“If fewer than 30% of target users demonstrate willingness to proceed beyond the core value interaction, the direction is considered invalid.”

Defining failure criteria before testing reduces confirmation bias.
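A decision boundary of this kind can be written down as data before any testing starts, so that the outcome is computed rather than argued. The following is a minimal sketch under stated assumptions: the 30% stop boundary comes from the example above, while the 50% proceed threshold, the treatment of the range in between as "pivot", and all names (`DecisionBoundary`, `decide`) are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    PIVOT = "pivot"
    STOP = "stop"


@dataclass(frozen=True)
class DecisionBoundary:
    """Thresholds fixed before testing begins (values are illustrative)."""

    proceed_at: float  # observed rate at or above this value -> proceed
    stop_below: float  # observed rate below this value -> stop

    def __post_init__(self) -> None:
        if not 0.0 <= self.stop_below <= self.proceed_at <= 1.0:
            raise ValueError("expected 0 <= stop_below <= proceed_at <= 1")


def decide(observed_rate: float, boundary: DecisionBoundary) -> Decision:
    """Map an observed behavioral rate to one of the three outcomes."""
    if not 0.0 <= observed_rate <= 1.0:
        raise ValueError("observed_rate must be between 0 and 1")
    if observed_rate >= boundary.proceed_at:
        return Decision.PROCEED
    if observed_rate < boundary.stop_below:
        return Decision.STOP
    # Between the two thresholds: the signal is neither confirmed nor invalid.
    return Decision.PIVOT


# Assumed thresholds: stop below 30% (from the example), proceed at 50% (hypothetical).
boundary = DecisionBoundary(proceed_at=0.50, stop_below=0.30)
print(decide(7 / 20, boundary))  # 35% of users continued past the core interaction
```

Because the boundary object is frozen, the thresholds cannot be adjusted after results arrive without creating a new, visibly different boundary.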

Output: A structured Validation Plan including prioritized hypotheses, metrics, and explicit decision thresholds.

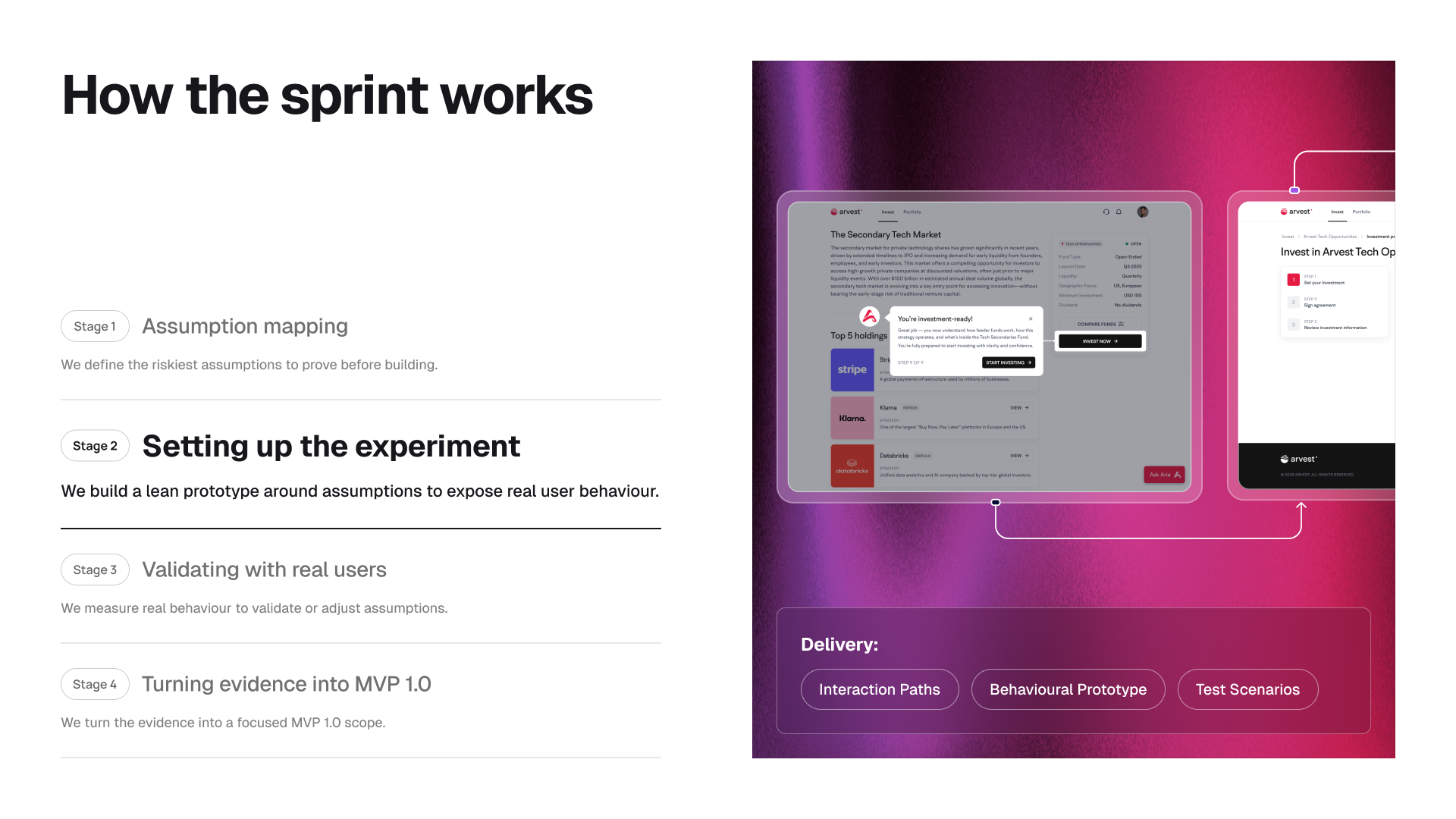

Setting up the experiment

Early validation requires a Minimum Viable Experiment.

The objective is to expose behavior, not to achieve feature completeness.

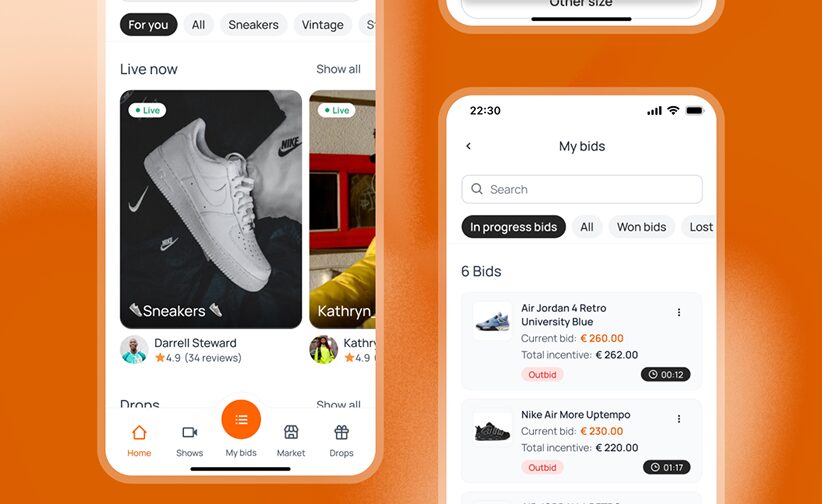

Common MVE implementations include:

- High-fidelity interactive prototypes

- Task-driven simulated onboarding flows

- Controlled checkout or transaction simulations

- Manual backend operations behind simplified interfaces

Instrumentation:

Behavioral tracking includes:

- Completion rate

- Drop-off points

- Dwell time

- Hesitation clusters

- Backtracking frequency

- Element interaction heatmaps

- Explicit articulation of value

Only the minimum required to test the hypothesis is constructed.

This minimizes premature architectural commitment and sunk-cost escalation.
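Two of the tracked metrics, completion rate and drop-off points, can be derived from a very simple log of which steps each session reached. The sketch below is illustrative only: the step names in `FLOW_STEPS`, the shape of the `sessions` mapping, and the `funnel_metrics` helper are assumptions, not part of any specific analytics tool.

```python
from collections import Counter

# Hypothetical ordered steps of a simulated onboarding flow.
FLOW_STEPS = ["landing", "signup", "connect_account", "first_action"]


def funnel_metrics(sessions: dict[str, list[str]], steps: list[str]) -> dict:
    """Compute completion rate and per-step drop-off counts.

    `sessions` maps a session id to the step names that session reached.
    """
    furthest = Counter()
    for reached in sessions.values():
        # Depth = number of consecutive steps reached from the start of the flow.
        depth = 0
        for step in steps:
            if step not in reached:
                break
            depth += 1
        furthest[depth] += 1

    total = len(sessions)
    completed = furthest[len(steps)]
    # A session "drops off" at the last step it reached without finishing the flow.
    drop_off = {steps[d - 1]: n for d, n in furthest.items() if 0 < d < len(steps)}
    return {
        "completion_rate": completed / total if total else 0.0,
        "drop_off": drop_off,
    }


sessions = {
    "s1": ["landing", "signup", "connect_account", "first_action"],
    "s2": ["landing", "signup"],
    "s3": ["landing"],
    "s4": ["landing", "signup"],
}
print(funnel_metrics(sessions, FLOW_STEPS))
```

In this made-up sample, one of four sessions completes the flow and the largest drop-off occurs after `signup`, which is the kind of signal that would be compared against the Stage 1 thresholds.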

Output:

- Instrumented prototype

- Test protocol

- Recruitment criteria

- Data capture plan aligned with Stage 1 thresholds

Validating with real users

Testing Environment

Testing is primarily unmoderated to preserve natural interaction patterns. Moderated sessions are selectively added for interpretive depth.

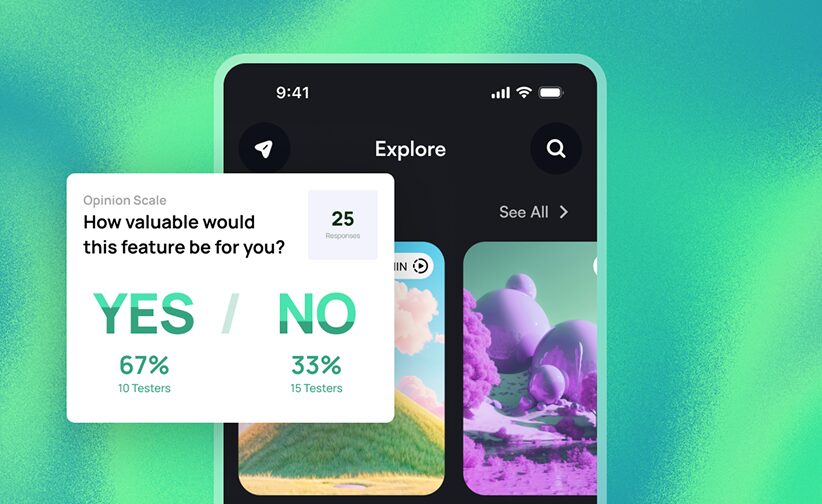

Behavioral Signals Prioritized

- Task completion

- Time-to-value

- Friction clusters

- Navigation loops

- Confusion markers

- Trust articulation

- Misinterpretation frequency

Quantitative and qualitative data are analyzed in combination.
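As an illustration of the quantitative side, time-to-value and navigation loops can be computed from a timestamped event stream. This is a rough sketch with assumed definitions: time-to-value is taken as the seconds between a session's first event and the first occurrence of a chosen value event, and a navigation loop is counted each time a user returns to a screen already visited. The event names and both helper functions are hypothetical.

```python
from typing import Optional


def time_to_value(events: list[tuple[float, str]], value_event: str) -> Optional[float]:
    """Seconds from the first event to the first occurrence of `value_event`.

    `events` is a list of (timestamp_in_seconds, event_name) pairs.
    Returns None if the session never reached the value event.
    """
    if not events:
        return None
    ordered = sorted(events)
    start = ordered[0][0]
    for timestamp, name in ordered:
        if name == value_event:
            return timestamp - start
    return None


def navigation_loops(screens: list[str]) -> int:
    """Count returns to a screen that was already visited earlier in the session."""
    seen: set[str] = set()
    loops = 0
    previous = None
    for screen in screens:
        if screen == previous:
            continue  # repeated events on the same screen are not a return
        if screen in seen:
            loops += 1
        seen.add(screen)
        previous = screen
    return loops


events = [(0.0, "open"), (12.5, "view_pricing"), (40.0, "first_transfer")]
print(time_to_value(events, "first_transfer"))
print(navigation_loops(["home", "pricing", "home", "pricing", "checkout"]))
```

Numbers like these only locate where friction occurs; the moderated sessions and qualitative notes are still needed to explain why.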

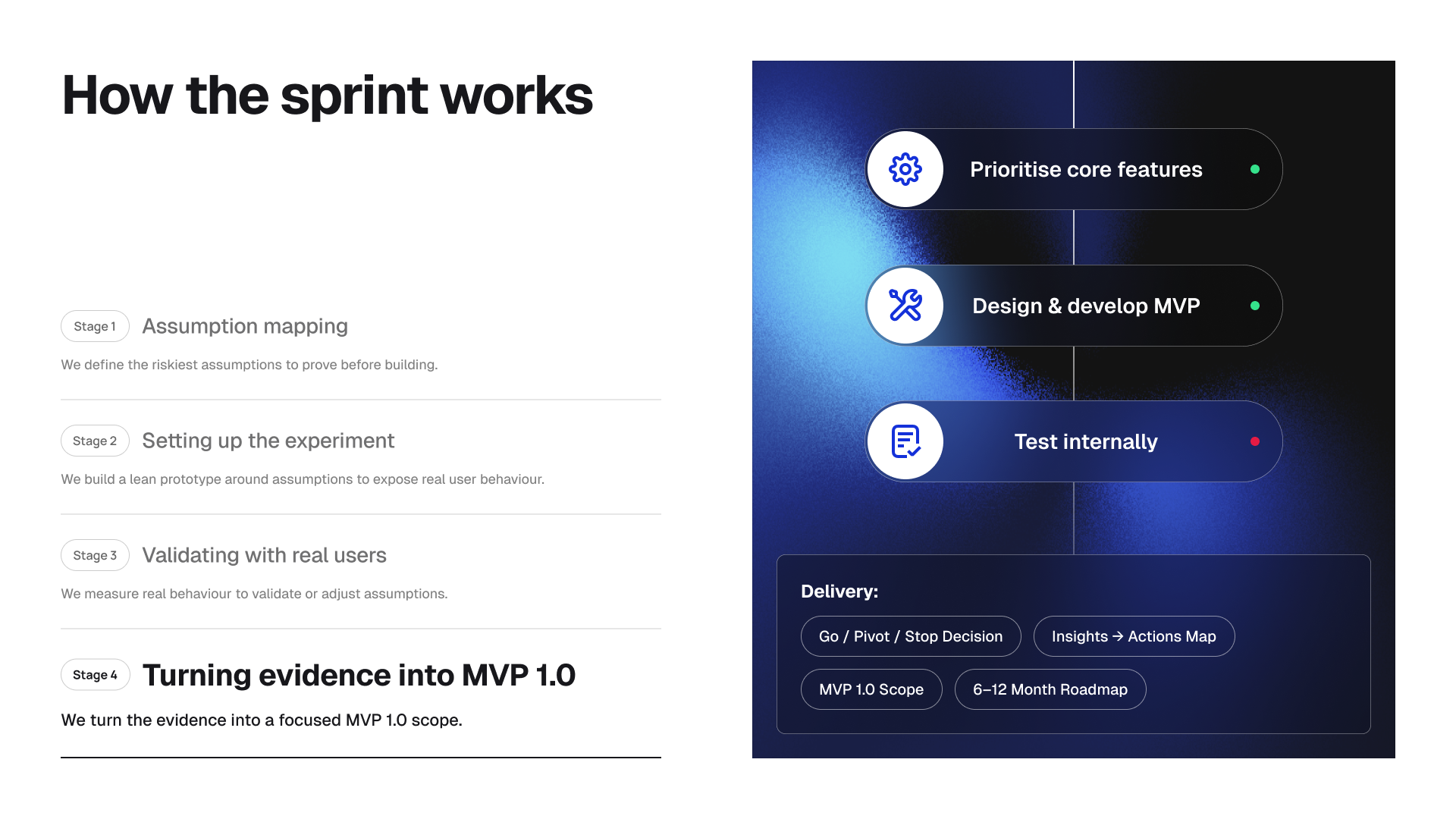

Turning evidence into MVP 1.0

Post-validation, development is sequenced based on:

- Confirmed behavioral leverage points

- Identified friction removal priorities

- Validated trust-building components

This reduces:

- Overbuilding before adoption proof

- Roadmap inflation

- Capital misallocation

- Engineering waste

Live behavioral tracking continues post-launch to maintain decision discipline.

Limitations

The framework does not eliminate uncertainty.

Recognized limitations:

- Limited cohort size

- Early adopter bias

- Short-term observational windows

- Prototype fidelity constraints

- Behavioral interpretation subjectivity

Transparency regarding these constraints prevents overconfidence.
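The effect of limited cohort size can be made concrete by reporting an interval around any observed rate instead of a single percentage. The sketch below uses the Wilson score interval, a standard method for binomial proportions; applying it here is a suggestion rather than part of the framework, and the cohort numbers are invented for illustration.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95% confidence)."""
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 <= successes <= n:
        raise ValueError("successes must be between 0 and n")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical cohort: 8 of 20 users proceeded past the core value interaction.
low, high = wilson_interval(8, 20)
print(f"observed 40%, plausible range {low:.0%} to {high:.0%}")
```

With 20 users, an observed 40% is compatible with a true rate of roughly 22% to 61%, so it cannot be cleanly separated from a 30% decision boundary. A result that close to the threshold would argue for extending the cohort before committing to an outcome.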

Summary

Separating hypothesis from personality and evidence from opinion introduces operational discipline into early-stage product development.

The framework does not guarantee product-market fit.

It reduces the probability of engineering investment based on invalid assumptions.

For SaaS and FinTech startups operating under runway constraints, this distinction materially affects survival probability.